Everyone knows that chameleons can change colors. But chameleons have other special abilities: They have prehensile tails and toes that give them excellent mobility. They have sticky tongues to catch prey. And their eyes can move independently of each other, enabling them to look in two directions at once.

In the 20th century, World War II and the Cold War spurred research and innovation to new and deadly heights. Globalization contributed to a growing pool of scientific talent. Twentieth-century scientists were experts, scholars, and leaders in their fields. They delved into their areas of expertise, made new discoveries, and passed on the tools of science to the next generation.

Then the century turned. In the 23 years since, the way science is conducted has changed forever.

The Internet. Artificial intelligence. Climate change. Pandemics. Read on to explore how life has changed for 21st century scientists, and why they need to be modern research chameleons in order to thrive.

How we got here: a rapidly evolving technological climate

Science has taken its current form largely because of new and powerful technology: the internet, artificial intelligence, open source software, cloud computing, blockchain, social media, and more.

The Internet

The rise of the internet has arguably affected science and research more than any other field. Just as the printing press revolutionized science centuries ago by allowing scientific ideas to be widely disseminated, the internet transformed science by removing barriers to publishing and communication.

In 2000, digital versions of more than 11 million research articles were available. As of 2018 that number was 114 million, and over 4 million new articles were published in 2021 alone.

Open Access and Cloud Computing

Open source and open access have been major forces powering new scientific discoveries. While definitions of open source vary, the term generally means that software, research, or technology can be freely accessed, used, and redistributed. Movements advocating for open access research and software have ramped up since the turn of the century.

Thanks to open source, many important internet technologies benefiting science emerged. The majority of HTTPS websites use OpenSSL for internet security and cryptography. PHP, a popular scripting language for web development, is estimated to be used by 79% of websites whose server-side language is known. Open source tools like the MySQL database and the Apache Hadoop framework for distributed data processing became workhorses for large-scale data storage, analysis, and computation. These new ways to handle data and share discoveries have promoted science’s increasing globalization, rapid dissemination of information, and sophisticated analyses.

Cloud computing technologies, a critical part of modern data science, also emerged. Cloud computing relies on technological developments like virtualization, which divides the hardware resources of a single computer into multiple virtual computers, and distributed computing, which coordinates multiple networked computers to solve problems too large or complex for any one machine. Cloud computing provides on-demand access to applications, servers, and data storage.

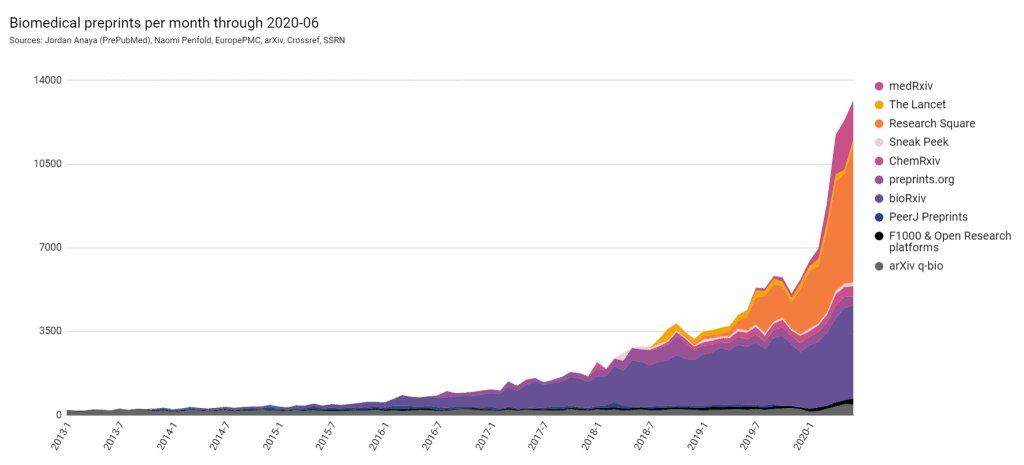

These technologies have driven drastic shifts in scientific publishing, including the emergence of countless new online scientific journals and the growth of open access research and publishing. The free dissemination of ideas enabled by open source servers and databases even led to preprints, versions of scientific articles that have not yet been peer reviewed, becoming a major way to share research.

Artificial intelligence

Artificial intelligence has grown at an unprecedented pace since the turn of the millennium. Machine learning algorithms trained on immense datasets, especially deep learning models, have improved AI outputs by leaps and bounds. The robotic vacuum cleaner Roomba came out in 2002; Apple’s Siri voice assistant debuted on the iPhone in 2011, followed by Amazon’s Alexa in 2014. Self-driving cars hit the road in 2018; GPT-4, released just this year, is now available in the popular LLM-based chatbot ChatGPT.

Generative adversarial networks, or GANs, first described in 2014, were another milestone. A GAN trains two neural networks against each other: a generator that produces synthetic data, such as images, and a discriminator that learns to tell synthetic data from real data. GANs have been widely used for image generation and image processing.

Another important development, and the key technology behind ChatGPT, is the large language model (LLM), a relatively recent advance in deep learning. LLMs are trained on massive text datasets to learn the statistical relationships between words and phrases. Most LLMs are neural networks with a transformer architecture, which uses a mechanism called attention to track relationships in sequential data such as words, phrases, and sentences. Many of these developments are extremely new: transformer models were first described in a 2017 research paper.
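To make "tracking relationships in sequential data" a little more concrete, here is a minimal sketch of scaled dot-product self-attention, the core operation of the transformer, in plain Python. It is a toy under stated assumptions: the three two-dimensional "word" vectors are hypothetical, and the same vectors serve as queries, keys, and values, whereas real transformers learn separate projections, use many attention heads, and work with thousands of dimensions.

```python
import math

def softmax(scores):
    # Subtract the max before exponentiating for numerical stability
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention: each output is a weighted average of
    the value vectors, weighted by how similar its query is to each key."""
    d = len(keys[0])
    outputs, weight_rows = [], []
    for q in queries:
        # Similarity of this token to every token, scaled by sqrt(d)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        # Blend the value vectors according to the attention weights
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        weight_rows.append(weights)
    return outputs, weight_rows

# Hypothetical toy embeddings for a three-word sequence
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.1]]
outputs, weights = self_attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 2) for w in row])
```

Each row of weights sums to 1, and a word attends more strongly to words whose vectors resemble its own, which is a simplified picture of how a transformer relates the words in a sentence to one another.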

AI technology has enabled numerous new approaches to science. Knowledge engines and research alert services help scientists and researchers find the right studies or coverage. Automated analyses, automated experiments, and even ChatGPT-assisted study writing are all current approaches made possible by AI.

Other notable technologies

Blockchain, best known as the technology behind the cryptocurrency Bitcoin (launched in 2009), is a decentralized, shared, and immutable digital list of transactions. It has enabled decentralized storage, cryptographic security, tamper-evident records of data, data backup and recovery, and more.
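The "tamper-evident" property comes from chaining: each record stores a cryptographic hash of the record before it, so altering any earlier record breaks the links that follow. The sketch below shows only that hash-chain idea, using SHA-256 from Python's standard library. It is a simplified illustration, not how Bitcoin is actually implemented, and it omits the decentralized consensus (such as proof of work) that real blockchains use to agree on which chain is valid. The function names and record contents are hypothetical.

```python
import hashlib
import json

GENESIS_PREV = "0" * 64  # placeholder "previous hash" for the first block

def block_hash(index, data, prev_hash):
    # Hash a canonical JSON encoding of the block's contents
    payload = json.dumps({"index": index, "data": data, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def build_chain(records):
    # Each block stores the hash of the block before it
    chain, prev = [], GENESIS_PREV
    for i, data in enumerate(records):
        h = block_hash(i, data, prev)
        chain.append({"index": i, "data": data, "prev": prev, "hash": h})
        prev = h
    return chain

def is_valid(chain):
    # Recompute every hash and check every link to the previous block
    prev = GENESIS_PREV
    for block in chain:
        if block["prev"] != prev:
            return False
        if block_hash(block["index"], block["data"], block["prev"]) != block["hash"]:
            return False
        prev = block["hash"]
    return True

chain = build_chain(["sample A measured", "sample B measured", "results shared"])
print(is_valid(chain))  # True
chain[1]["data"] = "sample B altered"  # tamper with a past record
print(is_valid(chain))  # False
```

Anyone holding a copy of the chain can rerun the check, which is why a shared, decentralized ledger makes quiet edits to past records easy to detect.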

Developments in genomics have also fueled the explosive growth of biotechnology. The completion of the Human Genome Project (which even indirectly made the rapid development of COVID-19 vaccines possible), minimally invasive surgery techniques, CRISPR gene-editing technology, and much more have propelled this exciting field.

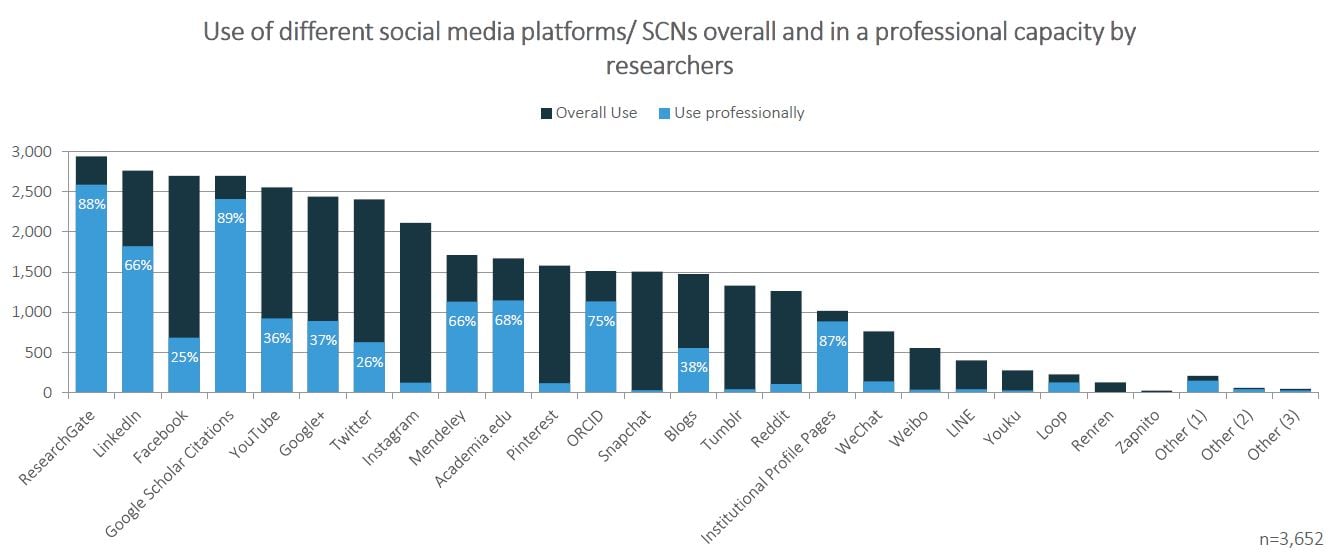

Lastly, the rise of social media has revolutionized the way information is shared, acquired, and discussed. LinkedIn and MySpace launched in 2003, Facebook in 2004, Reddit in 2005, and Twitter in 2006: platform after platform emerged, giving researchers and scientists new ways to connect, discuss, and even conduct research.

The first quality of the 21st century scientist: Specialized

One of the most visible long-term trends in science has been increasing specialization.

Go back a hundred years and most scientists had broad, varied research interests. While today’s scientists maintain diverse interests, their areas of expertise tend to be much narrower. Various studies and commentaries have suggested that publishing and funding processes are biased toward specialized studies. They also show that, as specialization rises, more and more research relies on interdisciplinary collaboration.

Because our knowledge has expanded so much, reaching the cutting edge of a field now requires a huge investment of time and effort. Some point to this as the reason for the slowdown in disruptive research. Others have suggested that policy is more to blame, as scientists are expected to produce a large volume of research while constantly jumping through hoops to win the grants that fund it.

Leading scientific thinkers have warned repeatedly against over-specialization, because big, complex challenges call for an interdisciplinary approach. An interdisciplinary approach enables a more holistic understanding of the problem, integrates diverse expertise, fosters creativity, and helps turn theoretical research into applied results.

But in the 21st century, an "interdisciplinary approach" has tended to mean collaboration among larger teams of highly specialized scientists, rather than a small team of scientists with broader expertise.

The second quality of the 21st century scientist: Platform-savvy

While scientists have become more specialized in the 21st century, their technological and communication skills have broadened several times over to accommodate the wave of new platforms and tools made possible by the internet.

Workflow and research tools

These start with simple productivity tools, like software that highlights key words and phrases in research papers. There are workflow management and team collaboration tools, like Kanban boards, or writing tools optimized to handle charts, citations, and bibliographies.

Then there are more complex technologies, like computational data analysis and automated research software that use generative modeling or automated analyses.

For example, the rise of artificial intelligence has made proactive research a reality. Instead of searching for studies manually, scientists can use software that finds relevant, high-quality studies and delivers them automatically. These tools include specialized scientific search engines, research alert software, automated RSS feeds, and more.

EBSCO, JSTOR, Cengage, and NewsRx all have huge repositories of studies or research coverage. Wolfram|Alpha and MIT’s START can be used to answer specialized or technical queries with natural language processing. ChatGPT can draft emails and replies, simplify complex topics, brainstorm ideas for grants or hypotheses, compose social media posts, and suggest relevant studies. On a more sophisticated level, GPT-4 and other LLMs can pre-process data, classify texts, detect anomalies in datasets, and generate automated reports.

Social media

Social media has become another major part of the 21st century scientist’s toolkit. Social networks disseminate scientific knowledge, break down barriers, and foster a culture of collaboration. They also make research and scientific contributions more widely visible and accessible.

Research shows that individual scientists can benefit from having an online presence. Social media offers connections and a forum for feedback and discussion. The research of scientists with large online platforms tends to reach a broader audience. Some academic institutions, aware of the benefits of greater visibility for research and discoveries, are even starting to reward researchers for engaging on social media.

Of course, social media isn’t all good. Incorrect use can lead to disinformation. It puts scientists at risk of unwarranted criticism and vitriol. But it’s yet another platform and toolkit that has become essential to the work of the 21st century scientist.

The third quality of the 21st century scientist: Adaptable

Scientists need to be able not just to learn new research tools, but also to adapt to evolving research and publishing processes.

For one, the open access movement has drastically changed the way the 21st century scientist publishes research and discovers new research.

From 1968 to 2009, scientific journal prices increased at three times the rate of inflation. The open access movement has fought to let the general public access scientific research at no cost. As a result, scientists are now more likely to pay (via their institutions) to publish research that is free for anyone to read, rather than to pay to read the journals where research is published.

And while open access journals tend to lack the prestige of some traditional journals, even traditional publishers have started to increase open access offerings. (Here’s our guide on how to choose an open access journal.)

Benefits of open access include:

- The public benefits from seeing research

- Researchers can access more research at a lower cost while getting more recognition

- Scientists can more easily engage in collaboration and cross-disciplinary conversation

- Funders (including the US government) get more bang for their buck

- The pool of contributors gets bigger

The rise of preprints has created another new platform for research sharing and discovery. A preprint is a version of a scientific paper posted on a publicly accessible platform before formal peer review.

Preprints, however, can contain errors. This may cause problems in the public sphere when the media do not distinguish between peer-reviewed papers and preprints. Still, preprints were gaining traction even before the COVID-19 pandemic. Benefits of preprints include:

- Instant dissemination and opportunity for feedback

- Preprints can be added to CVs to help early-career scientists with getting hired

- With over 60 platforms available today, there is a server for every niche and field

- Publicly available preprints also make it easier for scientists to find valuable information and ongoing discussions in their field

- Preprints provide an additional factor for research evaluation in hiring and funding

The COVID-19 pandemic gave preprint publishing an impressive boost because of the urgent need for information about the virus. Between January and April 2020, over 6,700 COVID-19-related preprints were published, a pace 100 times faster than the research response to the Ebola and Zika outbreaks in 2014 and 2015.

In addition, scientists need to adapt to career trends. The traditional pathway from PhD to tenured professor simply doesn't exist the way it used to: fewer tenured positions are available now than in the past. That means young scientists and postdocs need to navigate more complicated hiring processes, consider alternative career paths, embrace hiring trends like diversity statements, and learn how to stand out in a highly competitive pool.

The fourth quality of the 21st century scientist: Borderless

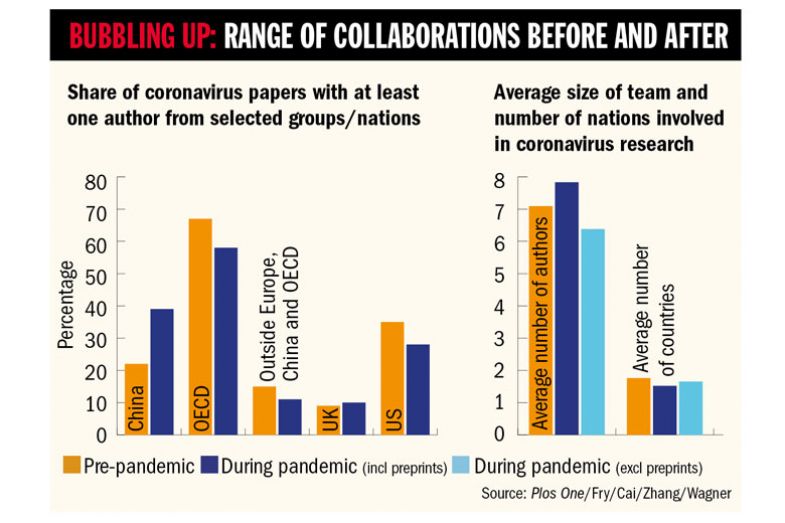

International collaboration in research has sharply increased since the 1990s. New communication technologies, the internet, and continuing development and globalization have all spurred this trend.

Countries such as Iran and Turkey have leapt above many European nations in terms of volume of scientific research published since 2000. And countries like Malaysia and Indonesia are producing well over 25 times the amount of scientific research they did twenty years ago. (For comparison, research volume in the U.S. has doubled since 2000, and grown about tenfold in China.)

International students earn a large share of undergraduate and graduate degrees in U.S. science and engineering. Countries have started a bidding war to attract talented international students.

The research suggests that science benefits from these trends. The benefits of international collaboration include:

- Access to joint facilities and equipment

- Sharing of specialized technical skills

- Use of unique sites and populations for studies

- Shared costs and risks

- The ability to address transnational and global issues

And those are simply the concrete, direct benefits to researchers. There are indirect benefits as well, such as economic ones. International scientific collaboration can increase scientific capacity in economies and allow access to foreign capital markets. Not to mention personal benefits to individual scientists—resume and reputation building, cultural experiences, and personal fulfillment, to name a few.

There are challenges to international collaboration in science. Time-zone differences, arranging for visas and permits for international work, and lack of funding can all pose barriers. But overseas collaborations have dramatically expanded the capacity for scientific innovation and discovery overall.

Areas in need of a push

Not everything in science has changed. The core tenets of the scientific process, like making hypotheses, testing them via experiments, and third-party retesting, remain largely intact.

Not everything is moving in the right direction, either. Reproducibility has plummeted, the pace of new discoveries has slowed down, and data sharing remains stagnant. Science’s lack of diversity has also held the field back, since diversity is known to boost creativity and innovation. These issues are all the subject of ongoing research that may shed light on how to fix science’s biggest flaws.

To be sure, new scientific approaches have also emerged. Big data and machine learning have the potential to transform science. Drawing conclusions from trends that machine learning detects in big datasets, while transformative, remains controversial. Research also suggests that advanced natural language generation technology like ChatGPT can produce papers good enough to be published in scientific journals. At the same time, journals such as Science moved quickly to ban text generated by AI tools like ChatGPT from the papers they publish.

One major way to improve science is through better science education. Paul Nurse at the Francis Crick Institute in London argues that PhD programs currently emphasize specialization too much, which results in students lacking exposure to wider areas of their subject. “Looking outside the immediate interests of a thesis project can lead to real creative advances,” he writes.

Other experts suggest that universities are slow to respond to emerging scientific disciplines like cell engineering and cybersecurity. These experts argue that institutions have to increase the number of STEM doctoral graduates in order to match the high demand for specialized technical knowledge in the contemporary job market.

Robert Tjian at the Howard Hughes Medical Institute suggests that scientists also need better people management skills. “Learning to manage teams and to work with others is going to become more important as science becomes more collaborative,” Tjian writes.

So just what is the 21st century scientist?

Intensely specialized and deeply knowledgeable in their field. Capable of communicating and integrating smoothly with other scientists in diverse fields, from different countries, and via brand new technological platforms. Able to adapt quickly to emerging trends in scientific research and publishing.

Specialized and yet adaptable. Equipped with unique talents, but also flexible. Capable of changing colors to match the environment. Highly mobile.

If need be, they can look in multiple directions at once. No doubt about it—the 21st century scientist is the ultimate modern research chameleon.